Future technology: a force for good or a source of fear?

Cyber-security expert Colin Williams explores the growing interaction between humans and technology.

By Grant Feller, September 2015

If we truly want to understand the potential future interaction between humans and technology, we first need to understand what prevented Nikita Khrushchev and John F Kennedy from blowing the world to smithereens at the height of the Cold War.

We also need to understand the artistic principles of the Enlightenment, the philosophy of Ludwig Wittgenstein, how primitive societies adapted to the widespread use of the written word, why the Nazis failed to capitalise on their advanced computer research and left Alan Turing to pick up the plaudits, and why the line “Open the pod bay doors, Hal” is one of the most memorable in cinema.

Because the future, says Colin Williams, an honorary fellow at the Cyber Security Centre, is indelibly bound to, and shaped by, the past. And to appreciate (rather than simply distrust) the awesome power of science, we also need a fine sense of the nuances of the arts, an understanding of models of human behaviour and a philosophical mindset ready to explore concepts of fear, power and collaboration.

“Sometimes we see the world in a very monochrome, binary way,” says Williams, a historian, scientific adviser and futurologist. “For instance, technology is seen as good. But advanced, sentient machines that can feel, think and mimic human behaviour and thus one day rise up and take control of society are bad. How on earth do we reach such conclusions? Because we fear change. We’re naturally suspicious of advances, especially in science. We like our rules, regulations and order. We don’t relish disruption. Yet science has always disrupted society and society has always adapted. And let’s not forget, it has always advanced, too. Certainly, we’re in the midst of one of the most exciting periods of scientific change that the human race has ever experienced, yet it’s not something we should fear.”

It's easy to get caught up in Williams's incisive enthusiasm and his bold theoretical leaps across time and genre.

“I think the debate comes at a crucial moment in our relationship with computers,” Williams says. “The world changes, human interactions change, societal demands change and there currently seems to be a disconnect in the public eye between what’s going on and what, in some eyes, could happen. The narrative is about the computer as destroyer, but that’s so one-dimensional. The computer might destroy us; on the other hand, it might work with us, it might happily do our bidding, it might propel mankind to even greater feats, it might help to inspire industries that would create jobs rather than just make us all redundant. Yet we focus instead on destruction. Why?”

The reasons, he believes, are extraordinarily complex but have their roots partly in religion (the notion that humans who attempt to mimic a creator God are doomed) and partly in the use of the word “revolution” when it comes to discussing technology, as if society, threatened by such a movement, were powerless to stand in its way. Mankind becomes merely a passive observer, and our social structures and values become driven by technology – what’s known as technological determinism.

“I believe we need to see technology through a different lens,” says Williams. “We need to embrace its ability to bestow upon us a sense of enlightenment and engagement. Instead, we seem obsessed with the concept of subordination – that we might lose the ability to control these machines, robots, sentient beings, whatever you want to call them, and that instead they will be in charge of us.

“We’re used to living in a deterministic world through a state of command and control. But that’s not the case any more. The system we have created for ourselves is no longer defined by those parameters; it is iterative, and we mustn’t fear that process. For instance, we have labelled this new era of robotic intelligence ‘artificial’, a horrible term, as if natural intelligence were wholesome and what we have replicated were evil, untrustworthy and unreal, instead of being something that is stronger and faster, and that perhaps can imbue us with those same qualities.”

Fear has become the defining emotion when contemplating a technologically advanced robotic future in which hierarchies are, potentially, reversed. But to know the future, Williams believes, we need to understand the past: why listening to the radio didn’t destroy our love of reading, as was supposed at the time; why rational thought and reasoned behaviour have so far prevented a nuclear holocaust; and why dealing with something that we may not be able to control entirely need not mean the end of humanity.

“That’s why I think my role as an historian within this field of cybernetics is so crucial,” Williams says. “Technology is a manifestation of how science fits into society, not of how it controls society. It’s the product of science and our imaginations, and those imaginative states are influenced by philosophical constructs: why we are, where we’ve been, how we’ve adapted. Understanding our past is crucial to knowing our future.”

In his role as development director at IT security specialists SBL, he often views the technology industry through the prism of history. “I came into the computer world decades ago as a man with an academic background who also understood sales – why we wanted these machines. Not just what they could do but what relationship we as humans could have with them. It helped me to formulate, within a business environment, a long-term strategy for the ongoing value of technology and for its potential. Yet still we seem to be obsessed not with benefits, or indeed a form of advanced liberation, but with being killed or, at the very least, enslaved.”

And here we encounter another history lesson, one that hints at a key stimulus of our current fears. The word robot was first used in a 1920 science-fiction play by the Czech writer Karel Čapek, in which artificial creatures are happy to do humans’ bidding until a hostile robot rebellion leads to the extinction of the human race. The word has its roots in Slavic words for slave: rab, rob and rabu. The concept of subjugation is central, Williams believes, to how we view intelligent technology, and perhaps explains why the debate has been hijacked by concerns that it could, in fact, destroy society.

“Technology has always reshaped society and we tell ourselves stories to try to understand why and how,” he says. “Whether it’s how tools advanced prehistoric man, or Čapek’s robots, or even the thinking, feeling computer program with the dulcet tones of Scarlett Johansson in the film Her, these are stories that try to explain simply how our complex behavioural patterns adapt to technology.

“This is why philosophy is so vital to understanding technology. Look at Stanley Kubrick’s visionary film 2001: A Space Odyssey. Hal, the computer that takes control of the fatal mission at the end of the film, is the manifestation of the human fear of being subjugated by the very thing that we enslaved.

“We are forever grappling with changes in our history that are utterly transformative. This moment we are in is one of those – perhaps the greatest of all transformations. It's thrilling to be alive now, to see these extraordinary changes, to be so interconnected with each other and yet also interdependent, liberated by technology so that we are not so reliant on the power of banks, politicians or large corporations. Society has always adjusted to such transformations, history tells us so over and over again. And yet the debate we are having is often too negative, too fearful, too accusatory.

“Fears are being amplified because we are susceptible to viewing things in a very linear fashion, but the relationship we are having, and will have, with technology is not linear. We need to be more constructive and collaborative and move beyond monochrome narratives.”

There has been a huge amount of coverage of the advances in cybernetics and “artificial” intelligence, often reduced to pithy soundbites hinting at portents of doom, but relatively little rigorous intellectual debate in which ideas are tested and contested in an entertaining way.

“Hopefully this debate will make a significant contribution to our understanding of the technological advances that we take for granted and view both with awe and suspicion,” Williams says. “We need to get to grips with these ideas.”

The 50-year-old from Liverpool is an adviser to the Cyber Security Centre, where an interdisciplinary approach – sociological studies, philosophy, semiotics, morals, ethics, psychology and history – melds with computer research and mathematics to understand how socio-technological systems can adapt to and improve society.

“We don’t discount fear,” he says. “It is a great stimulus, after all. It’s what pulled us back from the brink of mutually assured destruction just a few decades ago. And that happened because our leaders talked to each other; they confronted their fears and figured out a way around them. They communicated. And that’s what we want to do. More than that, we need to. We need to exploit technology rather than deny it and, hopefully, break down the barriers.”

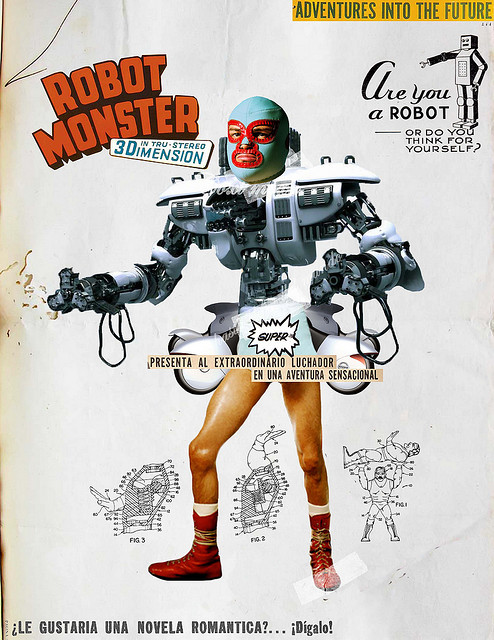

Images: Robot Monster by Javier Eduardo Piragauta Mora (via Flickr)

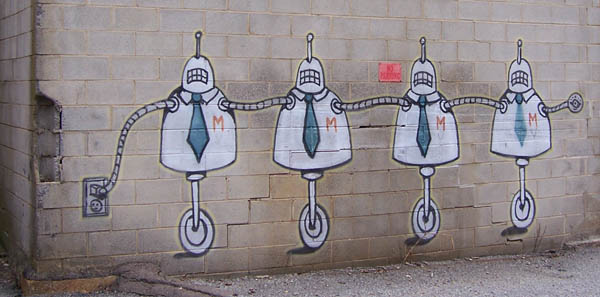

Robots by Justin Morgan (via Flickr)